What could be next for container orchestration?

Docker 1.12 is out in the wild! I hope you are having fun playing with the new orchestration features, breaking things in the process, and comparing it to other orchestrators. Yet this is just the beginning of an era of more sophisticated systems to deploy and manage your containers.

There are many things we can improve to make distributed systems not only easier to use but also more reliable for everyone. Expect to see many improvements over the next few years. As the state of the art in distributed systems evolves, orchestrators will provide a much better experience in terms of bootstrapping, maintenance, reliability and security. Here are a few ideas that I think would be interesting to see from an infrastructure standpoint, for both average and very large clusters:

- Super-stabilization and much better fault tolerance: throw any node in any state at the cluster and expect the whole system, with its services and tasks, to still be up when an entire region goes down, when the topology changes unexpectedly, or when you perform a wrong action on a cluster node because you were still distracted by last week's Game of Thrones episode. Desired state helps in that regard, but current systems still leave gaps in disaster recovery. For example, losing a majority of the nodes that manage the cluster state (losing the quorum on systems that use consensus-based replication) prevents the cluster from recovering without manual intervention, because no action can be performed to migrate or reschedule containers.

- Decentralized image management: images should be shared in a peer-to-peer fashion with no single point of failure.

- AI-assisted and resource-aware orchestration and placement: the system should place tasks using finer-grained heuristics and should "remember" the types of workload (network-, memory- or CPU-intensive) most often scheduled on a given cluster, to achieve optimal placement and resource utilization.

-

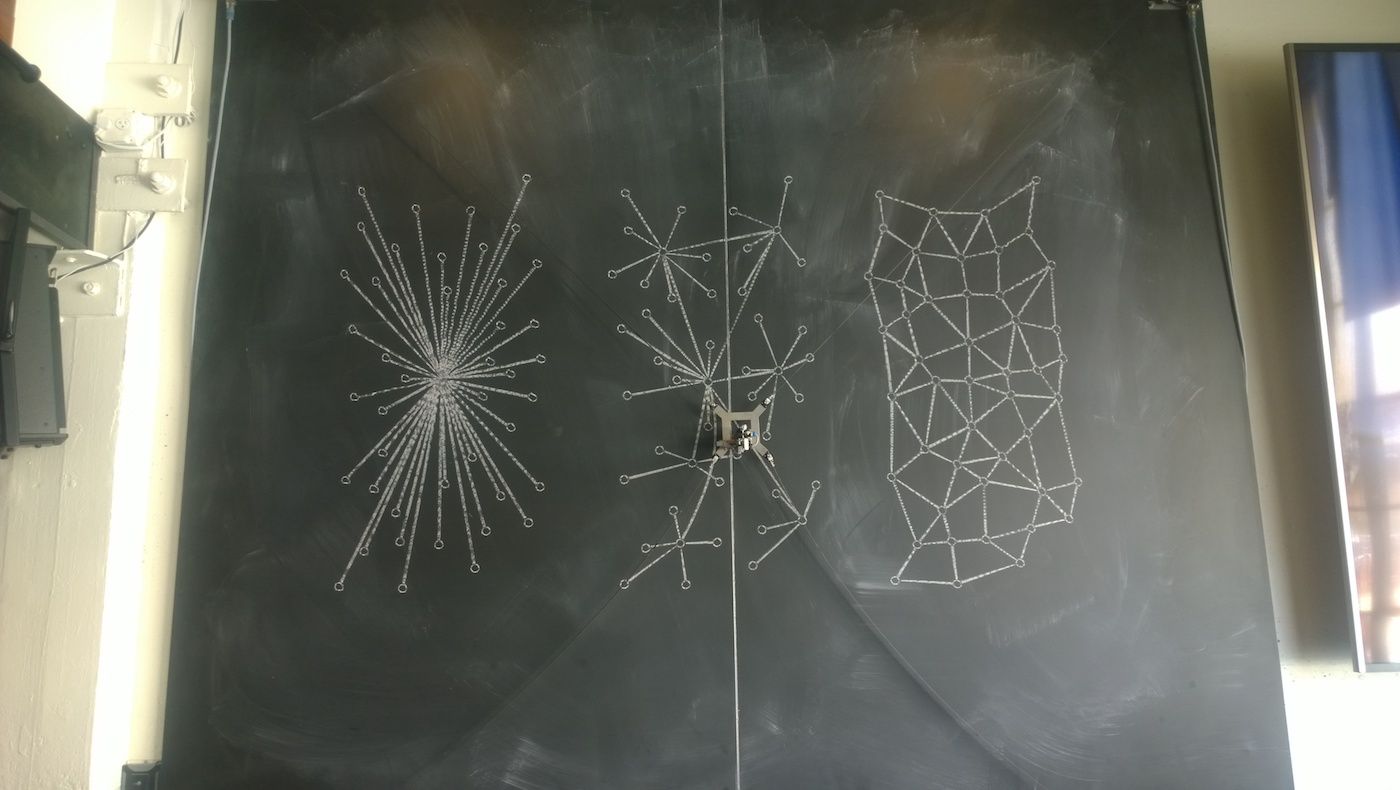

Coordination-free, leaderless protocol. A node is a node and then becomes whatever the system in place assigns it to do, it can still elect a leader or assign special roles to nodes to achieve specific tasks, but the protocol is fully distributed by nature as well as the state. Scheduling services goes through an Hybrid approach, a leader could be assigned for that task or everything goes through optimistic and distributed booking of slots with a probabilistic approach (peer to peer booking and slot management). This would ensure that machine failures are not hindering the placement of more tasks in the cluster when the leader goes down and can't be recovered. Cluster state in this case could be stale, but it ultimately converges and corrects mistakes and bad placements.

- Predictive rescheduling and service migrations: some machines fail more often than others, and the system should be able to predict that from the cluster's lifetime logs and datasets. It should also be able to migrate workloads to scale down the cluster outside of service peak hours, based on network traffic patterns.

- Energy efficiency: the cluster manager is aware of the energy consumption of the cluster and the tasks running on top of it and tries to optimize the placement as well as perform migrations to reduce the overall energy consumption without interrupting the service.
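
To make the quorum point in the fault-tolerance item concrete, here is a minimal sketch of majority-quorum arithmetic. It assumes a consensus protocol that needs a strict majority of manager nodes to agree, as Raft-style systems do. The function names are hypothetical and not taken from any orchestrator.

```python
def quorum_size(managers: int) -> int:
    """Smallest strict majority among `managers` voting nodes."""
    if managers < 1:
        raise ValueError("a cluster needs at least one manager")
    return managers // 2 + 1


def tolerated_failures(managers: int) -> int:
    """How many managers can be lost while a majority still exists."""
    return managers - quorum_size(managers)


def has_quorum(managers: int, reachable: int) -> bool:
    """True if the reachable managers can still agree on cluster state."""
    return reachable >= quorum_size(managers)
```

With 5 managers the quorum is 3, so the cluster tolerates 2 manager failures. Losing a third leaves the survivors unable to commit any change, which is the situation that calls for manual intervention today. Note that 4 managers tolerate only 1 failure, the same as 3.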
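
The resource-aware half of the placement item can be sketched with a simple best-fit heuristic: among the nodes that can hold a task, pick the one that would be left with the least spare CPU and memory. This is only an illustration under assumed names (`Node`, `Task`, `place`); it does not cover the "remembering workload types" part, and real schedulers weigh many more signals.

```python
from dataclasses import dataclass
from typing import List, Optional


@dataclass
class Node:
    name: str
    total_cpu: float   # cores
    total_mem: float   # GiB
    free_cpu: float
    free_mem: float


@dataclass
class Task:
    name: str
    cpu: float
    mem: float


def leftover(node: Node, task: Task) -> float:
    """Fraction of the node left unused after placing the task (lower = tighter fit)."""
    return ((node.free_cpu - task.cpu) / node.total_cpu
            + (node.free_mem - task.mem) / node.total_mem)


def place(nodes: List[Node], task: Task) -> Optional[str]:
    """Reserve resources on the tightest-fitting node; return its name or None."""
    candidates = [n for n in nodes
                  if n.free_cpu >= task.cpu and n.free_mem >= task.mem]
    if not candidates:
        return None
    best = min(candidates, key=lambda n: leftover(n, task))
    best.free_cpu -= task.cpu
    best.free_mem -= task.mem
    return best.name
```

Best fit packs tasks tightly, which keeps large nodes free for large tasks. A spread strategy (maximizing leftover instead) would be the opposite trade-off, favoring isolation over utilization.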
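
The optimistic, probabilistic slot booking from the leaderless item could look roughly like the sketch below. It assumes each slot has a single authoritative owner record (for instance, kept by the node hosting the slot) and that schedulers work from a possibly stale list of free slots. `SlotTable` and `book` are hypothetical names, and the single-process table stands in for what would be a network call.

```python
import random
from typing import Dict, Iterable, Optional


class SlotTable:
    """Authoritative slot ownership; a stand-in for per-node state."""

    def __init__(self, slots: Iterable[str]) -> None:
        self.owner: Dict[str, Optional[str]] = {s: None for s in slots}

    def claim(self, slot: str, task: str) -> bool:
        """Claim the slot only if it is still free (compare-and-set)."""
        if self.owner[slot] is None:
            self.owner[slot] = task
            return True
        return False


def book(table: SlotTable, stale_free_view: Iterable[str], task: str,
         rng: random.Random, max_attempts: int = 10) -> Optional[str]:
    """Pick random slots from a stale view until one claim succeeds."""
    candidates = list(stale_free_view)
    for _ in range(max_attempts):
        if not candidates:
            return None
        slot = rng.choice(candidates)
        if table.claim(slot, task):
            return slot
        # The view was stale for this slot: correct it and try another.
        candidates.remove(slot)
    return None
```

No leader is involved: conflicts are detected at claim time and the scheduler's view is repaired as it goes, which is the "stale but converging" behavior described in the item. Picking slots at random spreads concurrent schedulers out so they collide less often.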
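
For the predictive rescheduling item, the simplest possible starting point is a historical failure rate per machine, computed from the cluster's logs. The sketch below is deliberately naive (a plain average, not a trained model) and assumes the logs have already been reduced to a list of machine names, one entry per recorded failure. All names are hypothetical.

```python
from collections import Counter
from typing import Dict, Iterable, List


def failure_rates(failure_events: Iterable[str],
                  uptime_days: Dict[str, float]) -> Dict[str, float]:
    """Failures per day of observed uptime, for every machine with uptime > 0."""
    counts = Counter(failure_events)
    return {machine: counts[machine] / days
            for machine, days in uptime_days.items() if days > 0}


def drain_candidates(rates: Dict[str, float], threshold: float) -> List[str]:
    """Machines whose failure rate exceeds the threshold, worst first."""
    flagged = [m for m, r in rates.items() if r > threshold]
    return sorted(flagged, key=lambda m: rates[m], reverse=True)
```

A scheduler could migrate services away from the flagged machines before they fail again. A real predictor would also look at trends and hardware signals rather than a flat average.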
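
One way to read the energy-efficiency item is as a consolidation problem: pack the running tasks onto as few machines as possible so the rest can be powered down. The sketch below uses the classic first-fit decreasing heuristic for bin packing. It assumes identical machines and a single load number per task, both simplifications, and `consolidate` is a hypothetical name.

```python
from typing import List


def consolidate(task_loads: List[int], machine_capacity: int) -> List[List[int]]:
    """Group task loads onto machines using first-fit decreasing."""
    machines: List[List[int]] = []
    for load in sorted(task_loads, reverse=True):
        if load > machine_capacity:
            raise ValueError(f"task load {load} exceeds machine capacity")
        for assigned in machines:
            # Put the task on the first machine that still has room.
            if sum(assigned) + load <= machine_capacity:
                assigned.append(load)
                break
        else:
            # No existing machine fits: power on another one.
            machines.append([load])
    return machines
```

For example, loads of 5, 4, 3, 2 and 2 fit on two machines of capacity 8, as `[5, 3]` and `[4, 2, 2]`. The heuristic is not always optimal, and a real system would also have to account for the cost of the migrations themselves.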

I think the recurring topics here are fault tolerance and reliability: we can do much better and create a flexible system that handles a whole new class of failures and human mistakes. Seemingly unrelated technologies like AI could help a lot in that regard. Another topic is ease of use: while current orchestrators are becoming easy to deploy and manage, we can still do better and reach the point where you just download a binary, point it at an existing machine, and the system figures out what to do by itself. The orchestrator then becomes a transparent layer over the operating system, creating a distributed OS.
